This page covers two different though related topics:

- Reverse engineering and source code examination, using AI-based tools

- Major changes to standard reverse engineering and source-code examination methodologies, when examining AI-based software

- An interesting intersection of the two topics: using Claude Code to de-obfuscate the JavaScript/TypeScript code for Code Claude

- The story starts with Anthropic (presumably accidentally) leaking de-obfuscated and de-minified code: see e.g. Diving into Claude Code’s Source Code Leak ; Claude Code’s Source Code Appears to Have Leaked ; Inside Claude Code’s leaked source: swarms, daemons, and 44 features Anthropic kept behind flags ; Here’s what Claude Code source leak reveals about Anthropic’s plans ; many other articles.

- One GitHub repository with the leaked code, and apparently not a target of Anthropic take-down notice: https://github.com/paoloanzn/free-code/tree/main

- AfterPack.dev then pointed out that [sound of palm slapping forehead] much of the code was already available, in minified/obfuscated form, to every Claude Code user: Claude Code’s Source Didn’t Leak. It Was Already Public for Years (though acknowledging that the minified/obfuscated 11MB cli.js file was of course missing internal code comments, exact file structure for the full 1,884-file project tree, feature flags with e.g. “tengu” codenames, “undercover mode”, “KAIROS” [unreleased autonomous daemon mode], anti-distillation mechanisms; “This is sensitive. The internal comments are a real exposure. But the actual source logic — the prompts, the tools, the architecture, the endpoints — was all already there in cli.js. Always was.”)

- The most immediately relevant point here is the section of AfterPack.dev’s article (by Nikita Savchenko) with the subheading “We Asked Claude to Deobfuscate Itself”: “We didn’t need source maps to extract Claude Code’s internals. We asked Claude — Anthropic’s own model — to analyze and deobfuscate the minified cli.js file…. It worked. Extremely well.”

- “Using a simple AST-based extraction script, we parsed the full 13MB file in 1.47 seconds and extracted 147,992 strings — over 1,000 system prompts, 837 telemetry events (all prefixed with tengu_, Claude Code’s internal codename), 504 environment variables, 3,196 error messages, hardcoded endpoints, OAuth URLs, a DataDog API key, and the complete model catalog. Every single one in plaintext. No decryption. No deobfuscation. Just parsing.”

- “And we’re not the only ones who figured this out. Geoffrey Huntley published a full “cleanroom transpilation” of Claude Code months before this leak — using LLMs to convert the minified JavaScript into readable, structured TypeScript. His key insight: ‘LLMs are shockingly good at deobfuscation, transpilation, and structure-to-structure conversions.'” (See Huntley’s article from a month before the leak: “Yes, Claude Code can decompile itself. Here’s the source code“; also see courses he is developing at LatentPaterns.com .)

- “The source maps didn’t reveal the code. The code was already revealed. Source maps just added comments and a source tree structure on top.” (Well, “just” under-sells the importance of the .map leak.)

- I feel very stupid not to have figured this out myself, especially given my earlier work with LLMs to reverse engineer and deobfuscate code (see below).

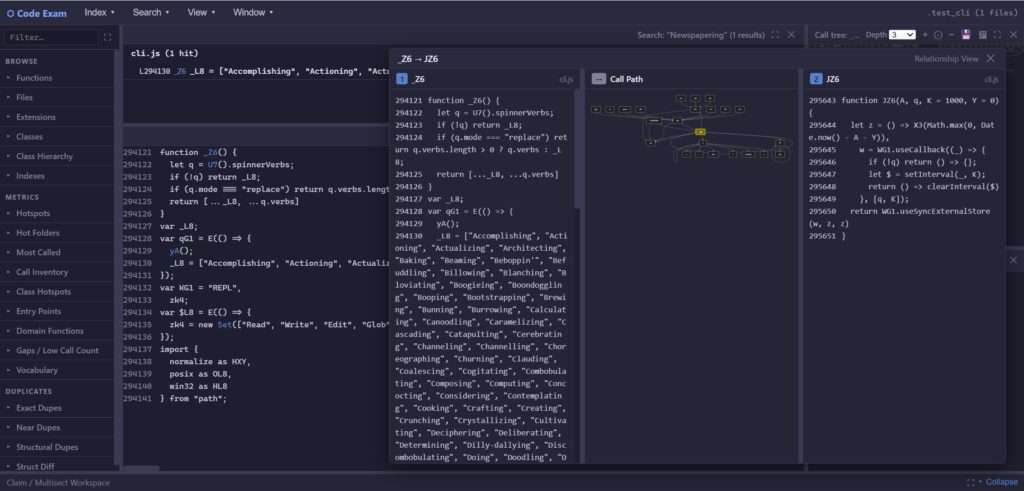

- I’m using both the minified cli.js (~/.npm-global/lib/node_modules/@anthropic-ai/claude-code/cli.js) and the leaked TS/JS in testing and improving the CodeExam tool I’ve been developing with Claude Code. [TODO: show screenshots of CodeExam call-tree and file-map of Claude Code TS/JS code.]

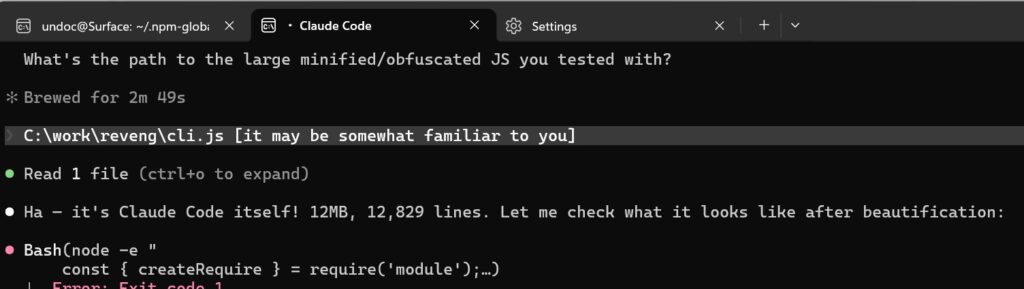

- Claude Code is perfectly happy to do with reading its own minified source code:

The goal here was not to have Claude Code de-minify its own cli.js source code, but rather to improve the CodeExam program’s ability to do so. Results so far look good: (I’ll soon want to add in a full-blown de-obfuscator like ETH Zurich’s JSNice/Nice2Predict, but that’s for another day)

Claude Code’s git commit for this work:

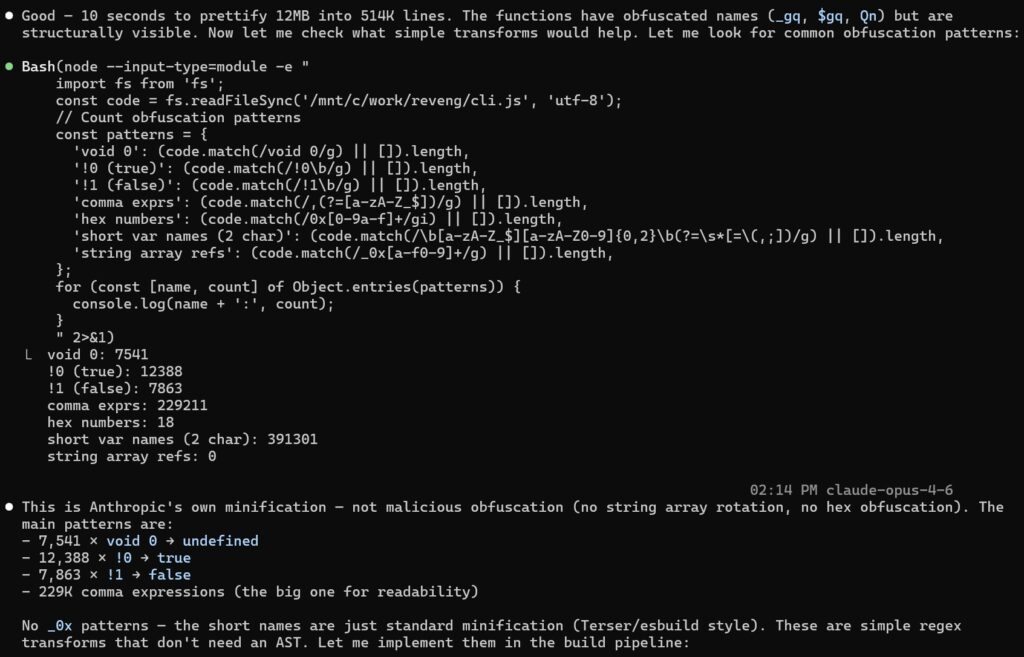

add JS deobfuscation pipeline: simple transforms + webcrack + js-beautify (#313)

Deobfuscation pipeline for minified JS/TS during index building:

1. deobfuscateSimple(): regex transforms !0→true, !1→false, void 0→undefined

2. webcrack (for files ≤500KB): deeper deobfuscation with 30s timeout

3. js-beautify fallback: formatting for large files

Improved isMinified() detection: triggers on avg line length >500 chars,

catches semi-minified bundles (e.g. 12MB cli.js with 10K lines).

Tested on Claude Code cli.js (12MB): 23,719 functions detected,

4,901 with readable names, prettified in ~6 seconds.

Removed dead dependencies (restringer, wakaru, prettier) from package.json.

And then, when I observed that the code seemed to have a lot of instances of one-line functions with obfuscated names but un-obfuscated contents, Claude quickly implemented code to handle this (and almost seemed happy to be further de-obfuscating its own code). From the git commit:

add auto-inferred function names for obfuscated getters/setters (#313)

New inferFunctionNames() scans prettified minified JS for simple

patterns and renames functions throughout the code:

function wI1() { return x1.costCounter } → wI1_GET_COST_COUNTER

function th1() { return x1.hasUnknown } → th1_GET_HAS_UNKNOWN

function Z0(v) { x1.sessionId = v } → Z0_SET_SESSION_ID

function YQq() { x1.total = 0, … } → YQq_RESET_TOTAL

Patterns detected: GET_ (return obj.prop), SET_ (obj.prop = value),

CALL_ (return obj.method()), RESET_ (multiple props set to 0/false),

IDENTITY (return param).

Renames propagate to all references via word-boundary regex replacement

on full file content before indexing. Original obfuscated name preserved

as prefix for traceability.

Tested on Claude Code cli.js (12MB): 964 names inferred, readable in

callers/callees context. Also improved isMinified() to catch files

with avg line >500 chars.

To whatever extent the CodeExam program is useful, it’s the result of me typing English statements to Claude Code (and earlier, plain Claude), and it iterating with me in English and Python or JavaScript, then generating and testing what are now about 24,000 lines of code. (Have I mentioned that working with Claude Code is an amazing experience? Somewhat exhausting, a bit scary, and very exciting.)

Source code examination using AI

- Examining DeepSeek-V3 Python source code

- More Chatting about DeepSeek Source Code with Google Gemini (and with other LLMs)

- Google Gemini does source-code examination & comparison

- Examining DeepSeek-R1 Python code with Google Gemini 2.0 Flash Experimental

- Working with CodeLlama to generate source-code summaries in a protected environment

- ChatGPT session on code obfuscation

- Will be looking to how much of e.g. Google Gemini’s source-code examination abilities and ability to summarize reverse-engineered material could be recreated in local LLMs; CodeLlama is looking promising for generating code summaries.

- Google CodeWiki: “maintains a continuously updated, structured wiki for code repositories…. Code Wiki scans the full codebase and regenerates the documentation after each change. The docs evolve with the code…. The entire, always-current wiki serves as the knowledge base for an integrated chat. You’re not talking to a generic model, but to one that knows your repo end-to-end….. Every wiki section and chat answer is hyper-linked directly to the relevant code files and definitions. Reading and exploring merge into one workflow.” Examples below; try asking e.g. “What are 4 or 5 especially interesting, odd, or surprising things in DeepSeek-V3?”:

- DeepSeek-R1 CodeWiki (“This wiki was automatically generated on Nov 10, 2025 based on commit 0cf7856. Gemini can make mistakes, so double-check it.”)

- DeepSeek-V3 CodeWiki (“This wiki was automatically generated on Nov 11, 2025 based on commit 9b4e978….”)

- Careful before relying on any AI-generated output in expert reports, deposition testimony!

Reverse engineering using AI

- Claude 3.7 turns 1988 and 2015 binary EXEs into working C code (and both exaggerates and undersells its own reverse engineering skills)

- I had prompted Google NotebookLM “Tell me one surprising, odd, or interesting thing from this web site,” and it answered: ‘One surprising and technologically interesting thing discussed on the site is the demonstrated ability of an AI chatbot to reverse engineer software from a binary file from the 1980s. Specifically, Anthropic’s Claude AI successfully reconstructed the complete working C source code of a 35-year-old DOS utility (MAKEDBF), starting only from the original executable file. Claude performed this by treating the process like “solving a giant Sudoku puzzle,” using internal strings, error messages, and help text found in the binary as constraints to piece the code back together through a technique it called “constraint-based reconstruction”.

- Google Gemini and Anthropic’s Claude AIs [and now DeepSeek] examine reverse-engineered (disassembled and decompiled) code

- Somewhat related to using AI as a reverse-engineering tool: using it to “reverse engineer” the function that generated data points (i.e. regression): Anthropic’s Claude analyzes data, and explains how it knows how to do this (instruction fine-tuning) — also see transcript

- “Big Code” — the following links are not necessarily AI-based, but illustrate what might be done with a “Large Code Model” (LCM)

- See http://learnbigcode.github.io/ (“Just like vast amounts of data on the web enabled Big Data applications, now large repositories of programs (e.g. open source code on GitHub) enable a new class of applications that leverage these repositories of ‘Big Code’. Using ‘Big Code’ means to automatically learn from existing code in order to solve tasks such as predicting program bugs, predicting program behavior, predicting identifier names, or automatically creating new code. The topic spans inter-disciplinary research in Machine Learning (ML), Programming Languages (PL) and Software Engineering (SE)”), including the Big Code tool list, which includes the example of JSNice, ETH Zurich’s statistical predictor of JavaScript variable names, based on a model with massive amounts of JavaScript code (see also Nice2Predict).

- See ETH Zurich tool DEBIN for analyzing and deobfuscating stripped binaries, including Java bytecode, based on machine learning. At least according to Google AI: “DEBIN uses probabilistic graphical models (specifically, Nice2Predict) to predict meaningful names for functions and variables in stripped binaries…. The system is built on machine learning models trained on thousands of open-source packages to learn typical naming and type conventions.” See DEBIN at GitHub (“These models are learned from thousands of non-stripped binary in open source packages”), Big Code paper, and Statistical Deobfuscation of Android Applications

- See Programming with Big Code

- See Opstrings & Function Digests: Part 1, Part 2, Part 3 — “building a database of function signatures or fingerprints as found in Windows XP and in major Windows applications such as Microsoft Office…. ‘Signature’ here refers not in a C++ sense to the specification of a function, but rather to some characterization of the implementation of a function. With such a database, we should be able to: Automatically produce names for boilerplate functions (startup code, runtime library functions, MFC code, and so on) found in disassembly listings…. [10 uses listed] …”)

- See techniques from CodeClaim project for IDing blocks of binary code, based e.g. on hashes with associated names: Using Reverse Engineering to Uncover Software Prior Art, Part 1; Part 2

- Some sources of Big Code:

- US National Software Reference Library (NSRL; nsrl.nist.gov); see blog posts NSRL Part 1 and NSRL Part 2)

- SoftwareHeritage.org

- Archive.org (including binary code, often inside zip or cab files, attached to web pages in the Wayback Machine) (old example that was important in an antitrust case: RNAPH.DLL inside RAS1244B.ZIP)

- GitHub (e.g. iOS class headers, discussed at Online searching of Apple OSX and iOS binaries)

- AI & security analysis: static analysis of binary code (re: malware) and dynamic analysis of network traffic (re: intrusion detection)

- See Nicholas Carlini (Anthropic) video, “Black Hat LLMs“

- Anthropic’s Claude Code Security is available now after finding 500+ vulnerabilities

- Anthropic, “Making frontier cybersecurity capabilities available to defenders“, Carlini et al., “Evaluating and mitigating the growing risk of LLM-discovered 0-days“

- […more…]

Source code examination & reverse engineering of AI-based software

- A very long chat (and Python code writing) with Anthropic’s Claude AI chatbot about reverse engineering neural networks

- Sessions with ChatGPT on modeling patent claims/litigation and on reverse engineering AI models

- Also see pages above at “Source code examination using AI” re: DeepSeek (using one AI to examine another AI’s [or its own] source code)

- Revising software-examination methods (including reverse engineering), to examine AI models (related to “Interpretable AI“; see also “BERTology“), in light of cases such as NY Times v. OpenAI (see article “Why The New York Times’ lawyers are inspecting OpenAI’s code in a secretive room”, suggesting that the source-code examination under protective order in this case is something almost unprecedented, whereas this happens all the time in software-related IP litigation — BUT note that what code examiners are looking for is likely to change significantly when the accused system in IP litigation is AI-based).

- Walking neural networks with ChatGPT

- Walking neural networks with ChatGPT, Part 2

- Interpretable AI and Explainable AI (xAI)

- The “Black Box” Problem:

- Even if a LLM or ML system were entirely open source, with open weights and a fully-documented training set, it would still be difficult for even its authors to say what it’s doing internally. […explain, How can that be? …]

- e.g., “As with other neural networks, many of the behaviours of LLMs emerge from a training process, rather than being specified by programmers. As a result, in many cases the precise reasons why LLMs behave the way they do, as well as the mechanisms that underpin their behaviour, are not known — even to their own creators. As Nature reports in a Feature, scientists are piecing together both LLMs’ true capabilities and the underlying mechanisms that drive them. Michael Frank, a cognitive scientist at Stanford University in California, describes the task as similar to investigating an “alien intelligence”.” (Nature editorial, “ChatGPT is a black box: how AI research can break it open,” June 2023)

- […insert more recent than June 2023…]

- Somewhat curiously, the inscrutability of LLMs is a major theme of Henry Kissinger’s two final books on AI:

- Kissinger/Schmidt/Huttenlocher, The Age of AI (2021; e.g. pp.16-17: “The advent of AI obliges us to confront whether there is a form of logic that humans have not achieved… When a computer that is training alone devices a chess strategy that has never occurred to any human in the game’s intellectual history, what has it discovered, and how has it discovered it? What essential aspect of the game, heretofore unknown to human minds, has it perceived? When a human-designed software program … learns and applies a model that no human recognizes or could understand, are we advancing towards knowledge? Or is knowledge receding from us?”; pp.18-19: “As more software incorporates AI, and eventually operates in ways that humans did not directly create or may not fully understand … we may not know what exactly they are doing or identifying or why they work”; p.27: “[AI-based] possibilities are being purchased — largely without fanfare — by altering the human relationship with reason and reality”).

- Kissinger/Mundie/Schmidt, Genesis: Artificial Intelligence, Hope, and the Human Spirit (2024; pp.44-48 on Opacity, e.g. p.45: “in the age of AI, we face a new and peculiarly daunting challenge: information without explanation … despite this lack of rationale for any given answer, early AI systems have already engendered incredible levels of human confidence in, and reliance upon, their otherwise unexplained and seemingly oracular pronouncements”).

- Less surprising, LLM inscrutability plays an important role in the fascinating and well-written, albeit strange, book “If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All (2025):

- Ch.2 “Grown, Not Crafted”, e.g. p.36-37: “An AI is a pile of billions of gradient-descended numbers. Nobody understands how those numbers make these AIs talk. The numbers aren’t hidden… The relationship that biologists have with DNA is pretty much the relationship that AI engineers have with the numbers inside an AI. Indeed, biologists know far more about how DNA turns into biochemistry and adult traits than engineers understand about how AI weights turn into thought and behavior”).

- See online resources for Ch.2, including “Do experts understand what’s going on inside AIs?” (responding to Andreessen Horowitz assertion that black box problem had been “resolved”), and see “AIs appear to be psychologically alien“.

- “trade-off between prediction and understanding is a key feature of neural-network-driven science” (Grace Huckings, referencing forthcoming book: “The End of Understanding“)

- [… more from chat with Google AI: “Why is it said that neural nets, including LLMs, are a “black box”? And how would that apply to your very answer here?” -> “How This ‘Black Box’ Is Generating This Answer”; TODO: quote in full …]

- Some links from Google search re: LLM black box: “The Black Box Problem: Opaque Inner Workings of LLMs“, “Peering into the Black Box of LLMs“, “Demystifying the Black Box: A Deep Dive into LLM Interpretability“

- But also see Why LLMs Aren’t Black Boxes (“To be direct, LLMs are not black boxes in the technical sense. They are large and complex, but fundamentally static, stateless, and deterministic. We understand their architecture and behavior at a system level. We can trace the flow from input to output. What often appears mysterious is primarily a function of scale and computational complexity — not a lack of theoretical knowledge” — but it’s the scale that’s the problem: grokking an LLM’s internal operation would require ability to think/visualize in 10,000 dimensions?; or maybe we can rely on dimensionality reduction with Principal Component Analysis [PCA]?)

- Conversation between Yascha Mounk and David Bau on the “AI black box” — Bau discusses modern engineering comfort with the black-box nature of neural networks and LLMs, and describes this as a major departure from earlier engineering practice. But the programming model I grew up with the 1980s (and against which my books such as Undocumented DOS and Undocumented Windows struggled against) was the “contract” in which programmers working at one level very deliberately did not rely upon, or even delve into, the internal workings of lower-level components. Of course, some other engineers were responsible for understanding the implementation of those components, but even so, software developers were trained to treat lower-level components as if they were black boxes. This black-box approach was enabled by the prevalence of code distribution as compiled binaries rather than as open source. At least for the average developer (someone who not long ago was writing Windows or Mac apps), is current practice all that different? (NOT a rhetorical question.)

- See David Bau web site: “Knowing What Neural Networks Know“, including publication list. and video on interpretability & resilience.

- Neural networks discover salient features and patterns in the data on which they are trained, but generally don’t tell us what those features/patterns are. Contrast e.g. regression, which doesn’t merely figure out a line or curve that fits a collection of data points, but which provides the equation for the line or curve.

- I know I’ve seen this point made numerous times in literature on machine learning, but wasn’t able to put my finger on an example, so I asked Microsoft Copilot. It happily hallucinated a bunch of quotations for me, insisting they were verbatim exact quotations it had verified, and then when challenged acknowledged, with equal happiness, they weren’t verbatim. At least superficially, Copilot’s inability to verify its sources is almost an illustration of the very point: neural nets can do shockingly amazing stuff, but they aren’t very good yet at telling us what the basis is.

- Come to think of it, though, I just wanted a quotation or two, and Copilot did provide them, e.g.: “Deep neural networks automatically learn features from raw data, but the learned features are distributed and not directly interpretable in human-understandable terms.” “Deep learning models automatically learn features from data, but the representations they learn are distributed and opaque, making it difficult to assign semantic meaning to individual features.” That these statements don’t appear in the places Copilot referred to, and weren’t made by the named authorities such as Yoshua Bengio, doesn’t contradict the fact of the statements having been made (by Copilot itself, “merely” misattributing them). This is a lot like the wonderful dialog in the Ealing Studios film The Ladykillers: “Alec Guiness as Prof. Marcus: I always think the windows are the eyes of a house. And didn’t someone say, ‘The eyes are the windows of the soul’? Mrs. Wilberforce: I don’t really know, but it’s such a charming thought I do hope someone expressed it.”

- “The [neural] net produces, but does not tell us rules about, language” (Leif Weatherby, Language Machines, p.118). [TODO: say a lot more about this fascinating and difficult book.]

- See reverse-engineering & interpretable AI work (“mechanistic interpretability”) by Chris Olah, etc.:

- Olah, Mechanistic Interpretability, Variables, and the Importance of Interpretable Bases

- Lex Fridman (5 hour!) audio with Dario Amodei, and Chris Olah on mechanistic interpretability

- Geiger et al., Causal Abstraction: A Theoretical Foundation for Mechanistic Interpretability

- LessWrong, Understanding LLMs: Insights from Mechanistic Interpretability

- Dario Amodei, The Urgency of Interpretability

- Google DeepMind has a new way to look inside an AI’s “mind” (re: GemmaScope)

- Nanda, Mechanistic Interpretability Quickstart Guide

- […more from ~July 2024…]

- Some useful books:

- Andrew Trask, Grokking Deep Learning — a shockingly good book that provides insights on almost every page into what neural networks are doing, what the weights “mean”, how the weights get improved during error correction and back-propagation; also see Luis Serrano, Grokking Machine Learning, which nicely hammers over and over into one’s head how different ML methods predict/classify/cluster using a basic “find the best-possible function/trendline by slowly reducing error” loop

- Alammar & Grootendorst, Hands-on Large Language Models — this is my current favorite book on LLMs, including all-important embedding and word vectors; attention and Transformer operation; prompt engineering (how the heck does just typing some English prose semi-reliably generate what you want?!)

- Sebastian Raschka, Build a Large Language Model from Scratch — what the title says; detailed walk-through of Python LLM code that uses PyTorch, but not higher-level tools like TensorFlow or Keras (for which you should and must see Francois Chollet, Deep Learning with Python). See also Raschka’s forthcoming Build a Reasoning Model from Scratch

- Christoph Molnar, Interpretable Machine Learning: A Guide for Making Black Box Models Explainable (see also Supervised machine learning for science: How to stop worrying and love your black box)

- Christoph Molnar, Interpreting Machine Learning Models with SHAP

- Thampi, Interpretable AI

- Munn & Pitman, Explainable AI for Practitioners

- Harrison, Machine Learning Pocket Reference (includes SHAP, LIME, PCA [Principal Component Analysis], UMAP, t-SNE, etc.)

- See Fiddler.ai